Audio and machine learning: Gaël Richard’s award-winning project

Gaël Richard, a researcher in Information Processing at Télécom Paris, has been awarded an Advanced Grant from the European Research Council (ERC) for his project entitled HI-Audio. This initiative aims to develop hybrid approaches that combine signal processing with deep machine learning for the purpose of understanding and analyzing sound.

“Artificial intelligence now relies heavily on deep neural networks, which have a major shortcoming: they require very large databases for learning,” says Gaël Richard. He believes that “using signal models, or physical sound propagation models, in a deep learning algorithm would reduce the amount of data needed for learning while still allowing for the high controllability of the algorithm.” Gaël Richard plans to pursue this line of research through his HI-Audio* project, which won an ERC Advanced Grant on April 26, 2022.

For example, the integration of physical sound propagation models can improve the characterization and configuration of the types of sound analyzed and help to develop an automatic sound recognition system. “The applications for the methods developed in this project focus on the analysis of music signals and the recognition of sound scenes, which is the identification of the recording’s sound environment (outside, inside, airport) and all the sound sources present,” Gaël Richard explains.

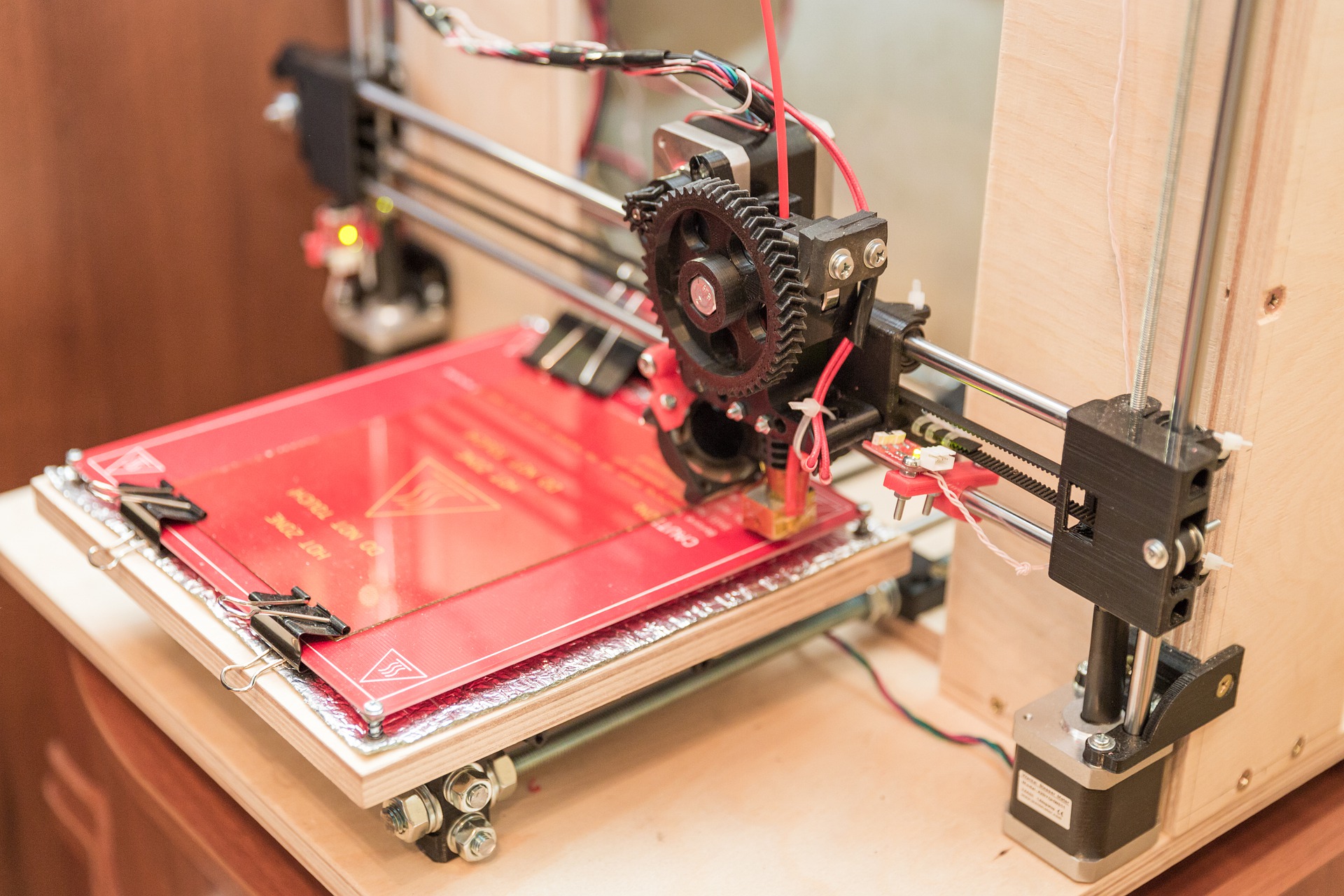

Industrial applications

Learning sound scenes could help autonomous cars identify their surroundings. Using microphones, the algorithm would identify surrounding sounds, so the vehicle could recognize a siren and track variations in its intensity. An autonomous car could then change lanes to let an ambulance or fire engine pass without having to “see” it with its cameras.

The processes developed in the HI-Audio project could be applied to many other areas. The algorithms could be used in predictive maintenance, for instance to check the quality of parts on a production line. A car part such as a bumper is typically inspected by analyzing the resonance produced when a non-destructive impact is applied to it.

The other key applications for the HI-Audio project are in the field of AI for music, particularly to assist musical creation by developing new interpretable methods for sound synthesis and transformation.

Machine learning and music

“One of the goals of this project is to build a database of music recordings from a wide variety of styles and different cultures,” Gaël Richard explains. “This database, which will be automatically annotated (with precise semantic information), will expand the research to include less studied or less distributed music, especially from audio streaming platforms.” One of the challenges of this project is to develop algorithms capable of recognizing the words and phrases spoken by the performers, transcribing the music regardless of where it was recorded, and offering new musical transformation capabilities (style transfer, rhythmic transformation, word changes).

“One important aspect of the project will also be the separation of sound sources,” Gaël Richard says. In a music recording, each source generally corresponds to a different instrument, and separation is usually achieved via filtering or “masking”: all other sources are hidden until only the target source remains. A less common approach is to isolate the instrument via sound synthesis, which involves analyzing the music to characterize the source to be extracted and then reproducing it. For Gaël Richard, “the advantage is that, in principle, artifacts from other sources are entirely absent. In addition, the synthesized source can be controlled by a few interpretable parameters, such as the fundamental frequency, which is directly related to the sound’s perceived pitch.” He adds: “This type of approach opens up tremendous opportunities for sound manipulation and transformation, with real potential for developing new tools to assist music creation.”
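To make the two ideas concrete, here is a toy sketch in Python with NumPy. It is not the HI-Audio method: the function names (`separate_by_mask`, `synthesize_harmonic`), the pure-tone "instruments" and the frequency band are all assumptions chosen for illustration. Real systems typically estimate a time-frequency mask with a learned model, whereas this example applies a fixed binary mask to the spectrum of the whole signal.

```python
import numpy as np


def separate_by_mask(mixture, sample_rate, low_hz, high_hz):
    """Masking: keep only the frequency bins in [low_hz, high_hz]."""
    spectrum = np.fft.rfft(mixture)
    freqs = np.fft.rfftfreq(len(mixture), d=1.0 / sample_rate)
    # Binary mask: 1 for bins attributed to the target, 0 for all others.
    mask = (freqs >= low_hz) & (freqs <= high_hz)
    return np.fft.irfft(spectrum * mask, n=len(mixture))


def synthesize_harmonic(f0_hz, harmonic_amps, duration_s, sample_rate):
    """Synthesis: build a tone from a fundamental frequency and a few
    harmonic amplitudes (hypothetical, interpretable parameters)."""
    t = np.arange(int(duration_s * sample_rate)) / sample_rate
    signal = np.zeros_like(t)
    for k, amp in enumerate(harmonic_amps, start=1):
        signal += amp * np.sin(2 * np.pi * k * f0_hz * t)
    return signal


sample_rate = 8000
t = np.arange(sample_rate) / sample_rate  # one second of audio

# Two made-up "instruments": a low tone and a quieter high tone.
source_a = np.sin(2 * np.pi * 220 * t)
source_b = 0.5 * np.sin(2 * np.pi * 1760 * t)
mixture = source_a + source_b

# Masking approach: hide everything outside the band of the low tone.
estimate_a = separate_by_mask(mixture, sample_rate, 100, 400)
error = np.max(np.abs(estimate_a - source_a))

# Synthesis approach: changing f0_hz changes the pitch of the output.
note = synthesize_harmonic(440.0, [1.0, 0.3], 1.0, sample_rate)
note_freqs = np.fft.rfftfreq(len(note), d=1.0 / sample_rate)
peak_hz = note_freqs[np.argmax(np.abs(np.fft.rfft(note)))]
```

The mask only works this cleanly because the two toy sources occupy separate frequency bands. Instruments in a real recording overlap in frequency, which is one reason masking can leave artifacts from other sources, and why a synthesized source driven by parameters such as the fundamental frequency is attractive.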

*HI-Audio will start on October 1, 2022 and will be funded through an ERC Advanced Grant for five years, for a total of €2.48 million.

Rémy Fauvel