Clicking, liking, sharing: all of our digital activities produce data. This information, which is collected and monetized by big digital information platforms, is on its way to becoming the virtual black gold of the 21st century. Have we all become digital workers? Digital labor specialist and Télécom ParisTech researcher Antonio Casilli has recently published a work entitled En attendant les robots, enquête sur le travail du clic (Waiting for Robots, an Inquiry into Click Work). He sat down with us to shed some light on this exploitation 2.0.

Who we are, what we like, what we do, when and with whom: our virtual personal assistants and other digital contacts know everything about us. The digital space has become the new sphere of our private lives. This virtual social capital is the raw material for tech giants. The profitability of digital platforms like Facebook, Airbnb, Apple and Uber relies on the massive analysis of users’ data for advertising purposes. In his work entitled En attendant les robots, enquête sur le travail du clic (Waiting for Robots, an Inquiry into Click Work), Antonio Casilli explores the emergence of surveillance capitalism, an opaque and invisible form of capitalism marking the advent of a new form of digital proletariat: digital labor – or working with our digits. From the click worker who knowingly performs paid microtasks to the user who produces data implicitly, the sociologist analyzes the hidden face of this work carried out outside the world of work, and the all-too-tangible reality of this intangible economy.

Read on I’MTech: What is digital labor?

Antonio Casilli focuses particularly on net platforms’ ability to put their users to work while those users remain convinced that they are consumers rather than producers. “Free access to certain digital services is merely an illusion. Each click fuels a vast advertising market and produces data which is mined to develop artificial intelligence. Every ‘like’, post, photo, comment and connection fulfils one condition: producing value. This digital labor is either very poorly paid or entirely unpaid, since no one receives compensation that measures up to the value produced. But it is work nevertheless: a source of value that is traced, measured, assessed and contractually regulated by the platforms’ terms and conditions of use,” explains the sociologist.

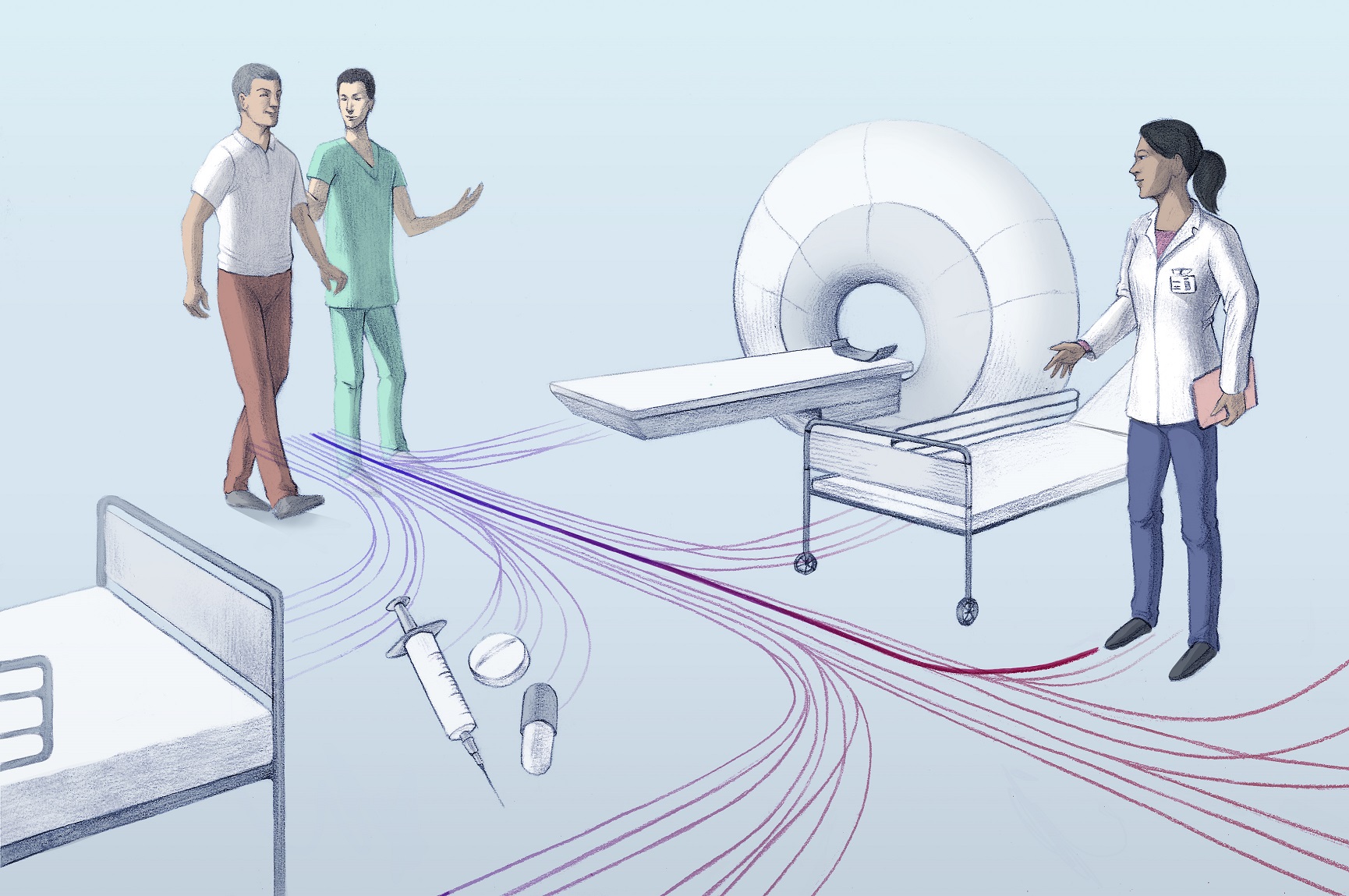

The hidden, human face of machine learning

For Antonio Casilli, digital labor is a new form of work which remains invisible, but is produced from our digital traces. Far from marking the disappearance of human labor, with robots taking over the work people once did, this click work challenges the boundaries between work that is produced implicitly and formally recognizable employment. And for good reason: microworkers paid by the task and user-producers like ourselves are indispensable to these platforms. The data they produce serves as the basis for machine learning models: behind the automation of a given task, such as visual or text recognition, humans are actually fueling applications by indicating clouds on images of the sky, for example, or by typing out words.

“As conventional wisdom would have it, these machines learn by themselves. But to calibrate their algorithms or improve their services, platforms need a huge number of people to train and test them,” says Antonio Casilli. One of the best-known examples is Mechanical Turk, a service offered by the American giant Amazon. Ironically, its name is a reference to a hoax that dates back to the 18th century. An automaton chess player, called the “Mechanical Turk,” was able to win games against human opponents. But the Turk was actually operated by a real human hiding inside.

Likewise, certain so-called “smart” services rely heavily on unskilled workers: a sort of “artificial” artificial intelligence. In this work designed to benefit machines, digital workers are poorly paid to carry out micro-tasks. “Digital labor marks the appearance of a new way of working which can be called ‘taskified,’ since human activity is reduced to a simple click, and ‘datafied,’ because it’s a matter of producing data so that digital platforms can obtain value from it,” explains Antonio Casilli. And this is how data can do harm. Alienation and exploitation: internet task workers in northern countries and, more commonly, their counterparts in India, the Philippines and other developing countries with low average earnings are sometimes paid less than one cent per click.

Legally regulating digital labor?

For now, these new forms of work are exempt from salary standards. Nevertheless, in recent years there has been an increasing number of class action suits against tech platforms to claim certain rights. Following the example of Uber drivers and Deliveroo delivery people, individuals have taken legal action in an attempt to have their commercial contracts reclassified as employment contracts. Antonio Casilli sees three possible ways to help combat job insecurity for digital workers and bring about social, economic and political recognition of digital labor.

“From Uber to platform moderators, traditional labor law (meaning reclassifying workers as salaried employees) could lead to the recognition of their status. But dependent employment may not be a ‘one-size-fits-all’ solution. There is also a growing number of cooperative platforms being developed, where the users become owners of the means of production and algorithms.” Still, for Antonio Casilli, there are limitations to these advances. He sees a third possible solution. “When it comes to our data, we are not small-scale owners or small-scale entrepreneurs. We are small-scale data workers. And this personal data, which is neither private nor public, belongs to everyone and no one. Our privacy must be a collective bargaining tool. Institutions must still be invented and developed to make it into a real common asset. The internet is a new battleground,” says the researcher.

Toward taxation of the digital economy

Would this make our personal data less personal? “We all produce data. But this data is, in effect, a collective resource, which is appropriated and privatized by platforms. Instead of paying individuals for their data on a piecemeal basis, these platforms should return the value extracted from this data to national or international authorities, through fair taxation,” explains Antonio Casilli. In May of 2018, the General Data Protection Regulation (GDPR) came into effect in the European Union. Among other things, this text protects data as a personality attribute instead of as property. Therefore, in theory, everyone can now freely consent, at any moment, to the use of their personal data and withdraw this consent just as easily.

While regulation in its current form involves a set of protective measures, setting up a tax system like the one put forward by Antonio Casilli would make it possible to establish an unconditional basic income. The very act of clicking or sharing information could give individuals a right to these royalties and allow each user to be paid for any content posted online. This income would therefore not be linked to the tasks carried out but would recognize the value created through these contributions. In 2020, over 20 billion devices will be connected to the Internet of Things. According to some estimates, the data market could reach nearly €430 billion per year by then, which is equivalent to a third of France’s GDP. Data is clearly a commodity unlike any other.

En attendant les robots, enquête sur le travail du clic (Waiting for Robots, an Inquiry into Click Work)

Antonio A. Casilli

Éditions du Seuil, 2019

400 pages

€24 (paperback), €16.99 (e-book)

Original article in French written by Anne-Sophie Boutaud, for I’MTech.
